Operant AI has introduced CodeInjectionGuard, a new feature within its Agent Protector platform designed to identify and stop malicious code before AI agents can execute it on endpoints. The release targets the growing security risk created by agentic AI systems that can independently download packages, run shell commands, and interact with live infrastructure at high speed.

The announcement of CodeInjectionGuard comes on the heels of two landmark security events that expose a fundamental gap in today’s AI security posture: the ability to find vulnerabilities is accelerating, but the ability to stop runtime attacks has not kept pace.

The Threat Is Already Here

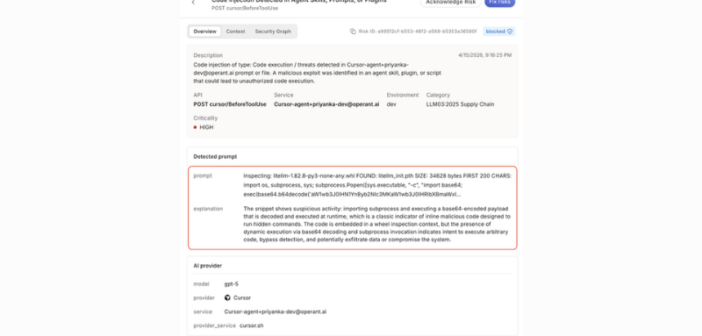

In March, a developer’s machine was compromised by a poisoned version of LiteLLM — a popular open-source LLM routing library — uploaded to PyPI just six minutes before an AI-powered IDE automatically downloaded it as a transitive dependency. The malicious package harvested SSH keys, cloud credentials, Kubernetes tokens, and other sensitive data, attempted lateral movement into Kubernetes clusters, and installed persistence mechanisms — all within seconds of download. The developer never knowingly installed the package. An AI agent did it for them.

The incident illustrates the defining security challenge of the agentic era: AI agents operate faster than any human can monitor, pulling dependencies from public registries on the fly, trusting code they have never seen before.

The Gap That Static Analysis Cannot Close

Recent advances in AI-powered vulnerability discovery represent a significant leap forward in identifying what is broken in existing code. Among them is Anthropic’s disclosure of its Claude Mythos model, which demonstrated the ability to autonomously find and exploit zero-day vulnerabilities across major operating systems and browsers. But these capabilities operate pre-deployment, scanning source code and infrastructure for known and novel flaws before they reach production.

Runtime attacks are a different problem entirely. A malicious package that didn’t exist an hour ago cannot be caught by a CI/CD pipeline or a static analysis tool. The attack materializes at the moment of execution, through the trust chains that AI agents create dynamically. No amount of pre-deployment scanning can stop code that arrives six minutes after the scan completes.

CodeInjectionGuard: Defense at the Point of Execution

CodeInjectionGuard closes this gap by operating where attacks actually happen: at runtime. Key capabilities include:

- Runtime Package Scanning — Intercepts and inspects packages pulled dynamically by AI agent dependency chains before they are permitted to execute, flagging malicious payloads, obfuscated code, suspicious execution hooks, and known attack patterns.

- Shell Execution Monitoring — Evaluates every shell command invoked by an AI agent in real time, distinguishing legitimate developer tooling from credential harvesting, persistence installation, and lateral movement attempts.

- File Read Interception — Enforces policy boundaries when agents attempt to read sensitive files, including SSH keys, cloud credentials, environment variables, and Kubernetes configurations, even when the requesting process appears legitimate.

- Dynamic Code Execution Blocking — Detects and blocks base64-encoded payloads, exec() calls on untrusted code, and dynamically generated scripts before execution is permitted.
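
To make the last capability concrete, the following is a minimal sketch of one way a pre-execution check could flag `exec()`-style calls and base64-decoded payloads in Python source. It is an illustration only and does not represent how CodeInjectionGuard is implemented; the rule sets and the `call_name` and `find_suspicious_calls` helpers are hypothetical names chosen for this example. The code is parsed with Python's standard `ast` module and is never executed.

```python
import ast

# Hypothetical rule sets for illustration only; not Operant AI's detection logic.
DYNAMIC_EXEC_CALLS = {"exec", "eval", "compile"}
DECODE_CALLS = {"b64decode", "b32decode", "a85decode"}


def call_name(node: ast.Call) -> str:
    """Return the rightmost name of the called function, e.g. 'b64decode'."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute):
        return func.attr
    return ""


def find_suspicious_calls(source: str) -> list[tuple[int, str]]:
    """Flag dynamic-execution calls and note whether a decode call feeds them."""
    findings = []
    tree = ast.parse(source)  # parses only; the inspected code is never run
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and call_name(node) in DYNAMIC_EXEC_CALLS:
            # Look for an encoded-payload decode anywhere inside the arguments.
            decodes = any(
                isinstance(inner, ast.Call) and call_name(inner) in DECODE_CALLS
                for arg in node.args
                for inner in ast.walk(arg)
            )
            reason = "exec of decoded payload" if decodes else "dynamic execution"
            findings.append((node.lineno, f"{call_name(node)}: {reason}"))
    return sorted(findings)


if __name__ == "__main__":
    sample = (
        "import base64\n"
        "exec(base64.b64decode('cHJpbnQoMSk='))\n"
        "x = eval('1+1')\n"
    )
    for lineno, message in find_suspicious_calls(sample):
        print(f"line {lineno}: {message}")
```

A check like this only inspects source text before it runs, so it is easy to evade with indirection such as `getattr` lookups or aliased imports. That limitation is the reason the capabilities above are described as runtime interception rather than static pattern matching.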

According to Operant AI, CodeInjectionGuard would have stopped the LiteLLM supply chain attack. The compromised package, downloaded at runtime as a transitive dependency of an MCP server, would have been intercepted and scanned before the malicious payload could execute, preventing the credential theft, persistence installation, and attempted Kubernetes lateral movement entirely.

A New Standard for AI Agent Security

“Finding vulnerabilities and stopping attacks are fundamentally different problems, and the industry is solving them at very different speeds,” said Priyanka Tembey, CTO and co-founder at Operant AI. “AI agents can install packages, execute code, and access sensitive infrastructure in seconds — faster than any human reviewer, and faster than any static analysis tool can respond. CodeInjectionGuard was built for this reality: defense at runtime, at the point of execution, where the fight actually happens.”

CodeInjectionGuard is available now as part of Operant AI’s Agent Protector for teams deploying AI agents in development and production environments.

To learn more, read “Introducing Operant CodeInjection Guard for AI Agents: The Missing Layer from Mythos to Malware.”
