Amazon Web Services, Inc. (AWS) announced the general availability of Amazon Elastic Compute Cloud (Amazon EC2) P4d instances, the next generation of GPU-powered instances.

P4d instances feature eight NVIDIA A100 Tensor Core GPUs and 400 Gbps of network bandwidth, 16 times that of P3 instances. By combining P4d instances with AWS’s Elastic Fabric Adapter (EFA) and NVIDIA GPUDirect RDMA (remote direct memory access), customers can deploy P4d instances in EC2 UltraClusters.

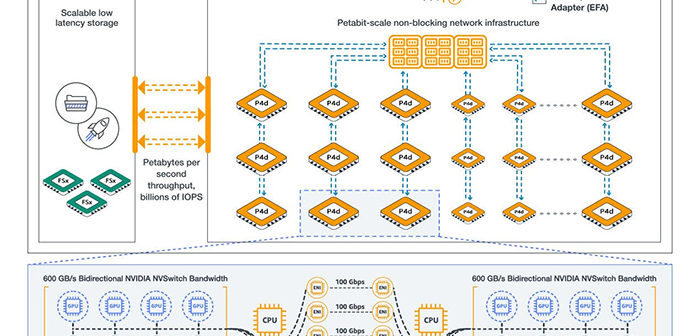

EC2 UltraClusters allow customers to scale P4d instances to over 4,000 A100 GPUs. They use AWS-designed, non-blocking, petabit-scale networking infrastructure integrated with Amazon FSx for Lustre high-performance storage, giving customers on-demand access to supercomputing-class performance for machine learning training and HPC.
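To put "over 4,000 A100 GPUs" in instance terms, the sketch below divides a GPU target by the eight GPUs each P4d instance carries. The helper name `p4d_instances_needed` is hypothetical, and the calculation is plain arithmetic: it ignores account quotas, capacity availability, and how UltraClusters actually place instances.

```python
# Eight A100 GPUs per P4d instance, as stated in the announcement.
GPUS_PER_P4D = 8


def p4d_instances_needed(target_gpus: int) -> int:
    """Hypothetical helper: minimum number of P4d instances that together
    provide at least `target_gpus` A100 GPUs (integer ceiling division)."""
    if target_gpus < 0:
        raise ValueError("target_gpus must be non-negative")
    return -(-target_gpus // GPUS_PER_P4D)


print(p4d_instances_needed(4000))  # 500 instances for 4,000 GPUs
print(p4d_instances_needed(4001))  # one extra GPU needs a 501st instance
```

Integer ceiling division is used instead of `math.ceil` on a float so the result stays exact for any GPU count.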

Data scientists and engineers continue to push the boundaries of machine learning by creating larger and more complex models that provide higher prediction accuracy for a broad range of use cases, including perception model training for autonomous vehicles, natural language processing, image classification, object detection, and predictive analytics. Training these complex models against large volumes of data is compute-, network-, and storage-intensive, and often takes days or weeks. Customers want not only to cut the time it takes to train their models but also to lower their overall training spend. Together, long training times and high costs limit how often customers can train their models, which slows the pace of machine learning development and innovation.

The increased performance of P4d instances makes training machine learning models up to 3x faster (reducing training time from days to hours), and the additional GPU memory helps customers train larger, more complex models. As data becomes more abundant, customers are training models with millions and sometimes billions of parameters, such as those used for natural language processing (document summarization and question answering), object detection and classification for autonomous vehicles, image classification for large-scale content moderation, recommendation engines for e-commerce websites, and ranking algorithms for intelligent search engines, all of which require ever more network throughput and GPU memory. Each P4d instance features eight NVIDIA A100 Tensor Core GPUs, capable of up to 2.5 petaflops of mixed-precision performance, and 320 GB of high-bandwidth GPU memory.
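The per-instance totals above follow from multiplying per-GPU figures by eight. The per-GPU numbers in this sketch are an assumption taken from NVIDIA's published specifications for the 40 GB A100 (312 TFLOPS dense FP16/BF16 Tensor Core throughput, 40 GB of HBM2); they are not stated in this announcement.

```python
# Assumed per-GPU figures for the 40 GB NVIDIA A100 (from NVIDIA's datasheet,
# not from this announcement).
A100_FP16_TENSOR_TFLOPS = 312  # dense FP16/BF16 Tensor Core peak, no sparsity
A100_MEMORY_GB = 40            # HBM2 capacity per GPU
GPUS_PER_P4D = 8               # stated in the announcement

# Aggregate peak mixed-precision throughput, converted from TFLOPS to PFLOPS.
peak_mixed_precision_pflops = GPUS_PER_P4D * A100_FP16_TENSOR_TFLOPS / 1000
# Aggregate GPU memory across the instance.
total_gpu_memory_gb = GPUS_PER_P4D * A100_MEMORY_GB

print(f"{peak_mixed_precision_pflops:.3f} PFLOPS")  # 2.496 PFLOPS, i.e. about 2.5
print(f"{total_gpu_memory_gb} GB")                  # 320 GB
```

These are theoretical peaks; real training throughput depends on the model, precision settings, and how well the workload keeps the Tensor Cores busy.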

AWS says P4d instances are the first in the industry to offer 400 Gbps network bandwidth with Elastic Fabric Adapter (EFA) and NVIDIA GPUDirect RDMA network interfaces, which enable direct communication between GPUs across servers for lower latency and higher scaling efficiency and help remove scaling bottlenecks in multi-node distributed workloads. Each P4d instance also offers 96 Intel Xeon Scalable (Cascade Lake) vCPUs, 1.1 TB of system memory, and 8 TB of local NVMe storage to reduce single-node training times.

By more than doubling the performance of the previous-generation P3 instances, P4d instances can lower the cost to train machine learning models by up to 60%, giving customers greater efficiency than expensive and inflexible on-premises systems. HPC customers will also benefit from P4d’s increased processing performance and GPU memory for demanding workloads such as seismic analysis, drug discovery, DNA sequencing, materials science, and financial and insurance risk modeling.

P4d instances are also built on the AWS Nitro System, AWS-designed hardware and software that has enabled AWS to deliver an ever-broadening selection of EC2 instances and configurations to customers while offering performance that is indistinguishable from bare metal, providing fast storage and networking, and ensuring more secure multi-tenancy. P4d instances offload networking functions to dedicated Nitro Cards that accelerate data transfer between multiple P4d instances. Nitro Cards also enable EFA and GPUDirect, which allow direct cross-server communication between GPUs, facilitating lower latency and better scaling performance across EC2 UltraClusters of P4d instances. These Nitro-powered capabilities make it possible for customers to launch P4d instances in EC2 UltraClusters with on-demand, scalable access to over 4,000 GPUs for supercomputer-class performance.

“The pace at which our customers have used AWS services to build, train, and deploy machine learning applications has been extraordinary. At the same time, we have heard from those customers that they want an even lower cost way to train their massive machine learning models,” said Dave Brown, Vice President, EC2, AWS. “Now, with EC2 UltraClusters of P4d instances powered by NVIDIA’s latest A100 GPUs and petabit-scale networking, we’re making supercomputing-class performance available to virtually everyone, while reducing the time to train machine learning models by 3x, and lowering the cost to train by up to 60% compared to previous generation instances.”

Customers can run containerized applications on P4d instances using AWS Deep Learning Containers, with libraries for Amazon Elastic Kubernetes Service (Amazon EKS) or Amazon Elastic Container Service (Amazon ECS). For a more fully managed experience, customers can use P4d instances through Amazon SageMaker, which gives developers and data scientists the ability to build, train, and deploy machine learning models quickly. HPC customers can use AWS Batch and AWS ParallelCluster with P4d instances to orchestrate jobs and clusters efficiently. P4d instances support all major machine learning frameworks, including TensorFlow, PyTorch, and Apache MXNet, giving customers the flexibility to choose the framework that works best for their applications. P4d instances are available in US East (N. Virginia) and US West (Oregon), with availability in additional regions planned soon. P4d instances can be purchased as On-Demand Instances, with Savings Plans, with Reserved Instances, or as Spot Instances.
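For readers launching instances directly rather than through SageMaker, EKS, ECS, Batch, or ParallelCluster, the sketch below assembles keyword arguments for the EC2 RunInstances API as exposed by boto3's `run_instances`. It assumes the `p4d.24xlarge` instance type, a single EFA interface, and an optional cluster placement group. The helper `build_p4d_launch_params` and every ID and name in it are hypothetical placeholders. Production P4d setups typically attach more than one EFA interface, which this sketch does not do. Only the Spot purchase option maps to a launch parameter here. Savings Plans and Reserved Instances are billing arrangements applied to matching usage, so they do not appear.

```python
from typing import Any, Dict, List, Optional


def build_p4d_launch_params(
    ami_id: str,
    subnet_id: str,
    security_group_ids: List[str],
    count: int = 1,
    placement_group: Optional[str] = None,
    use_spot: bool = False,
) -> Dict[str, Any]:
    """Hypothetical helper: build kwargs for boto3's EC2 run_instances that
    request `count` p4d.24xlarge instances with one EFA network interface."""
    params: Dict[str, Any] = {
        "ImageId": ami_id,
        "InstanceType": "p4d.24xlarge",
        "MinCount": count,
        "MaxCount": count,
        # With NetworkInterfaces, security groups are set on the interface itself.
        "NetworkInterfaces": [
            {
                "DeviceIndex": 0,
                "SubnetId": subnet_id,
                "Groups": security_group_ids,
                "InterfaceType": "efa",  # request an Elastic Fabric Adapter
            }
        ],
    }
    if placement_group:
        # A cluster placement group keeps instances close on the network.
        params["Placement"] = {"GroupName": placement_group}
    if use_spot:
        # Request Spot capacity; omitting this launches On-Demand.
        params["InstanceMarketOptions"] = {"MarketType": "spot"}
    return params


# Placeholder IDs for illustration only.
params = build_p4d_launch_params(
    "ami-EXAMPLE", "subnet-EXAMPLE", ["sg-EXAMPLE"],
    count=2, placement_group="example-cluster-pg", use_spot=True,
)
# To actually launch (requires AWS credentials and P4d quota), e.g. in US East (N. Virginia):
#   import boto3
#   boto3.client("ec2", region_name="us-east-1").run_instances(**params)
```

Keeping parameter construction separate from the API call makes the request easy to inspect or test without touching an AWS account.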